Generative AI systems now influence students in ways that are not meaningfully observable, reconstructable, or accountable to any responsible adult or institution.

The question is not whether AI is useful; it is whether institutional responsibility remains clearly defined and enforceable when such systems interact directly with students.

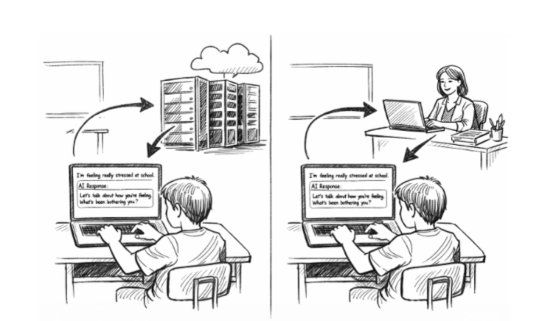

The child stays; only the source of the response changes. When the responder shifts from a licensed adult to a probabilistic system, what binds responsibility to the output?

The Institutional Accountability Framework (IAF) evaluates whether conditions such as the following are present:

- Observability: can the interaction be seen and reconstructed?
- Accountability: who is responsible for the output?
- Bounded operation: where and how is the system used?
- Institutional backing: is there liability or insurance coverage?